Search visibility is no longer controlled only by traditional search engines. In 2026, large language models (LLMs), AI assistants, and autonomous crawlers increasingly read, interpret, and retrieve content directly from websites to generate answers, recommendations, and summaries.

If your site is not accessible to these systems, it risks becoming invisible—regardless of how strong your traditional rankings may be.

This guide explains how to make a genuinely AI-friendly website, focusing on the crawlability, structure, and technical SEO factors that matter for both humans and machines.

Traditional search engines, and the crawlers that feed them such as Googlebot, are built around keyword matching and document-level ranking. The crawler discovers URLs and fetches pages for indexing; the engine then evaluates relevance largely through lexical signals, links, and engagement metrics.

LLM engines and AI crawlers operate on a fundamentally different model. Instead of ranking pages, they perform semantic reasoning and synthesis, often using fan-out search—where a single prompt expands into multiple sub-queries that are evaluated and recombined into a final answer.

As a result, AI systems assess content through a meaning-first lens that changes how information is extracted and used:

This shift requires site SEO to support semantic clarity, structured interpretation, and retrievability, not just discoverability.
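To make the fan-out idea concrete, here is a toy sketch of one prompt expanding into sub-queries, each matched against content and then recombined. Everything in it is an assumption made for illustration: the passages, the fixed facets, and the word-overlap scoring are stand-ins, and real AI systems use far richer semantic matching than this.

```python
import re
from typing import List, Optional, Set

# Hypothetical passages standing in for chunks of a site's content.
PASSAGES = {
    "pricing": "Plans start at a flat monthly price with a free trial.",
    "setup": "Setup takes five minutes and needs no developer.",
    "support": "Support is available by chat and email every day.",
}


def tokens(text: str) -> Set[str]:
    """Lowercase word tokens; a crude stand-in for semantic matching."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def fan_out(prompt: str) -> List[str]:
    """Expand one prompt into sub-queries using fixed, made-up facets."""
    return [f"{prompt} {facet}" for facet in ("price", "setup", "support")]


def best_passage(query: str) -> Optional[str]:
    """Return the id of the passage sharing the most words with the query."""
    scores = {pid: len(tokens(query) & tokens(text)) for pid, text in PASSAGES.items()}
    pid = max(scores, key=scores.get)
    return pid if scores[pid] > 0 else None


def answer(prompt: str) -> List[str]:
    """Evaluate each sub-query, then recombine the distinct hits in order."""
    hits: List[str] = []
    for sub_query in fan_out(prompt):
        pid = best_passage(sub_query)
        if pid is not None and pid not in hits:
            hits.append(pid)
    return hits


print(answer("acme review"))
```

The practical takeaway is that each sub-query looks for a passage that answers it on its own, so a page with one clearly scoped passage per topic gives the system more to retrieve than a page that blends everything together.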

A truly accessible website is not only about human usability or compliance standards. For AI systems, accessibility means:

If AI systems cannot reliably parse your pages, they will not surface your brand in AI-generated responses.
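One rough way to check what a machine can parse is to read the raw HTML the way a simple crawler might. The sketch below assumes a crawler that does not execute JavaScript, which is an assumption for illustration rather than a statement about any particular AI system. The `OutlineParser` class and the sample page are hypothetical.

```python
from html.parser import HTMLParser


class OutlineParser(HTMLParser):
    """Collect the title, headings, and visible text from raw HTML."""

    SKIP = {"script", "style"}      # content a reader never sees
    HEADINGS = {"h1", "h2", "h3"}

    def __init__(self):
        super().__init__()
        self.stack = []       # currently open tags that we track
        self.title = ""
        self.headings = []    # (tag, text) pairs in document order
        self.text = []        # visible text fragments

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP or tag in self.HEADINGS or tag == "title":
            self.stack.append(tag)
            if tag in self.HEADINGS:
                self.headings.append((tag, ""))

    def handle_endtag(self, tag):
        if self.stack and self.stack[-1] == tag:
            self.stack.pop()

    def handle_data(self, data):
        current = self.stack[-1] if self.stack else None
        chunk = data.strip()
        if current in self.SKIP or not chunk:
            return
        if current == "title":
            self.title += chunk
            return
        if current in self.HEADINGS:
            tag, text = self.headings[-1]
            self.headings[-1] = (tag, (text + " " + chunk).strip())
        self.text.append(chunk)


# Hypothetical page: real content in the HTML, plus an empty JavaScript mount point.
html = """<html><head><title>Pricing</title><script>var x = 1;</script></head>
<body><h1>Plans</h1><p>Starts at $10.</p><div id="app"></div></body></html>"""

parser = OutlineParser()
parser.feed(html)
print(parser.title, parser.headings, parser.text)
```

If the extracted text is nearly empty while the page looks complete in a browser, the content is probably being rendered client-side, and a crawler of this kind would see very little of it.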

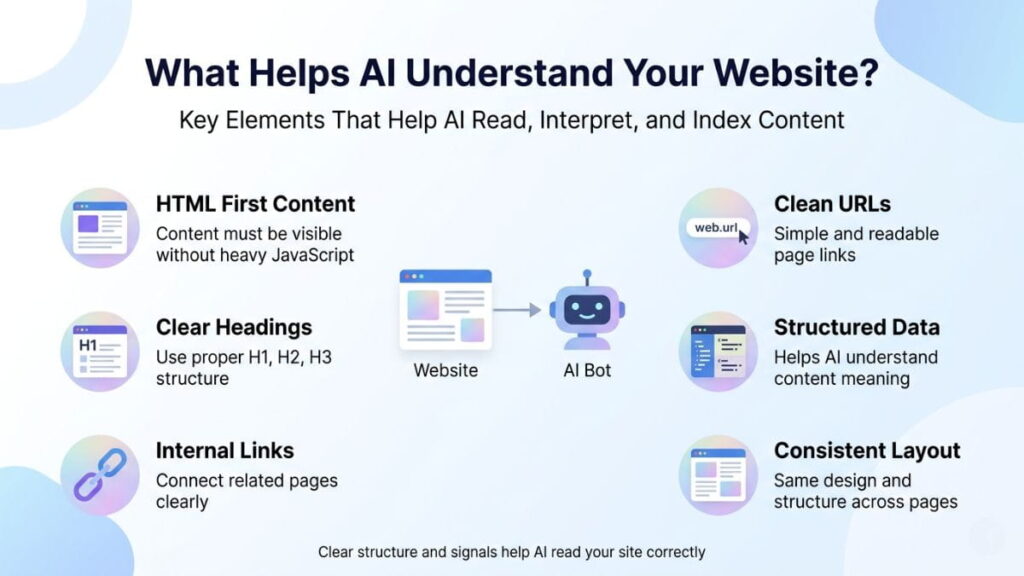

Several technical and structural components directly affect AI understanding:

AI systems rely heavily on structured data and consistency to determine relevance and authority.

Structured data acts as a translation layer between your content and AI systems. When implemented correctly, it helps AI models understand:

Combined with a clean site architecture (clear navigation paths, shallow page depth, and logical categorization), structured data makes pages easier to crawl and improves retrieval accuracy.

This is one of the most overlooked aspects of modern technical SEO strategies, and of the website optimization tools that support them.
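As an illustration of structured data in practice, the sketch below builds a schema.org `Article` description as JSON-LD and wraps it in the script tag that would sit in a page's head. The helper names, the author, and the URL are hypothetical, and the properties shown are only a small subset of what schema.org defines.

```python
import json


def article_jsonld(headline, author, date_published, url):
    """Build a schema.org Article description as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }


def as_script_tag(data):
    """Serialize the dictionary into a JSON-LD script tag for the page head."""
    payload = json.dumps(data, indent=2)
    # Escape "</" so text inside the JSON cannot close the script tag early.
    payload = payload.replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


# Hypothetical values, for illustration only.
data = article_jsonld(
    headline="How to make a website accessible to AI crawlers",
    author="Jane Example",
    date_published="2026-01-15",
    url="https://example.com/blog/ai-accessible-website",
)
print(as_script_tag(data))
```

Generating the markup from the same data that renders the page helps keep the structured data consistent with what visitors actually see.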

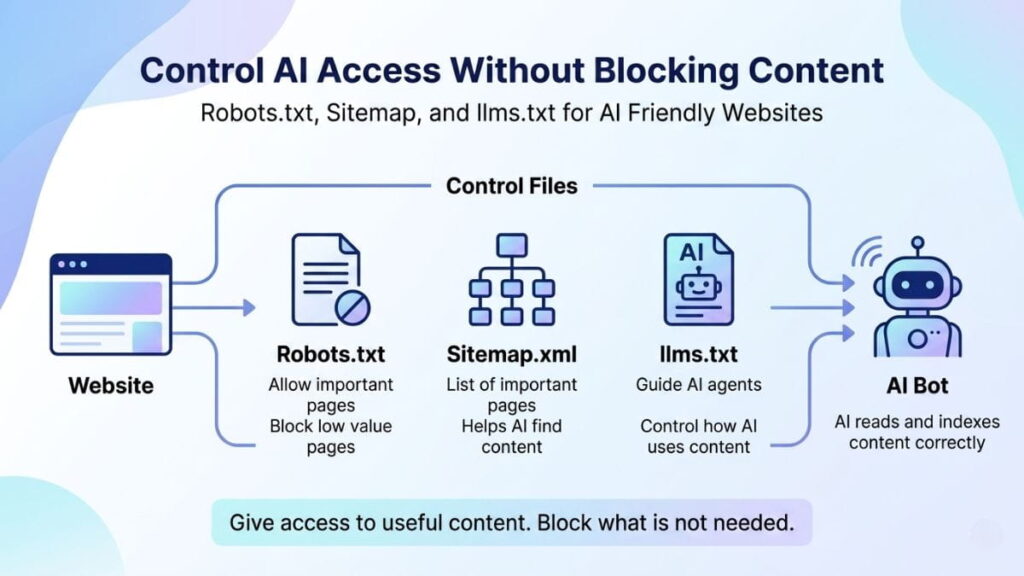

AI accessibility does not mean opening everything indiscriminately.

Misconfigured access files, such as robots.txt, are among the most common technical issues that block AI systems from understanding websites.
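A quick way to catch this kind of misconfiguration is to test a robots.txt file against the crawlers you care about before deploying it. The sketch below uses Python's standard `urllib.robotparser`. The rules, the URL, and the `access_report` helper are hypothetical, and the user-agent tokens are examples only, so confirm the current names in each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: one AI crawler is kept out of /private/,
# and every other crawler is kept out of /admin/.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Disallow: /admin/
"""


def access_report(robots_txt, agents, url):
    """Return {agent: allowed} for one URL under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


agents = ["GPTBot", "ClaudeBot"]  # example tokens, not an exhaustive list
for url in ("https://example.com/private/report", "https://example.com/admin/"):
    print(url, access_report(ROBOTS_TXT, agents, url))
```

Note that in this parser a crawler with its own `User-agent` group follows only that group, so the `GPTBot` group here does not inherit the `/admin/` rule from the wildcard group. Surprises like that are easier to catch with a check of this kind than by reading the file.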

You do not need a separate strategy—but you do need an expanded one.

AI-friendly optimization builds on strong technical SEO, but adds emphasis on:

If your site is optimized only for ranking and not for understanding, AI systems may ignore it—even if it ranks well today.

Some of the most damaging issues include:

These issues reduce both AI crawlability and long-term search resilience.

Making your website accessible to LLM engines and AI crawlers is no longer optional. It is fast becoming the baseline requirement for digital visibility in 2026 and beyond.

Brands that invest early in AI-friendly website architecture, structured data, and clean technical foundations will gain more sustainable discoverability.

At Ooptiq, we help brands design and optimize websites for AI understanding, retrieval, and trust. As search evolves, so should your strategy.